Heuri: Of Instruments and Men

In my previous article, I wrote about a constructivist approach to mathematics—where the journey comes first, and making mistakes is actually part of the process, not a failure. I also mentioned machine learning as a way to speed up Heuri’s development. That’s a risky move, because if ML isn’t handled right, it easily becomes a black box that just feeds students answers and kills their autonomy. Since I’m designing Heuri solo, I’d love to hear your feedback—feel free to reach out.

In this article, I’ll explain why building an ethically sound AI platform isn’t just a philosophical debate, but the only way Heuri will actually work for students.

The Illusion of Certainty

To understand the problem with how AI is marketed today, imagine buying your first car. You’re unsure whether it’s worth it. Consider these two sellers:

Seller A

I’ll be honest with you: I saved this car just for you because I know you’re a good guy. Anyway, the car is perfect and is practically a steal: remember the discount I offered you and you only, for you being a good guy? This is your last chance to make one of the best life decisions! In the end, both your family and me will be happier!

Seller B

I’ll be honest with you: I know nothing about this car, it just arrived here yesterday. It looks good on the outside but we have no experience with it yet. Bring a specialist to check the car out.

Two things are likely: with Seller A, you might brush off the pressure, confidently thinking you’re immune to their manipulation. With Seller B, however, you’d probably appreciate the honesty—they admit what they don’t know, which gives you the space to make your own informed decision.

Buy a car, they said. Image source: PD Image Archive

We don’t buy cars every day, but we do live in a world where omnipresent GenAI products are marketed using strategy A. Although such products are built on an immense corpus of linguistic material, they rarely respond with “I don’t know” or “Are you sure about that?”.

The reason is clear: if they did, users would use and engage with GenAI products far less.[1]

Just Deal With It

Two major viewpoints emerge during rapid adoption of a technology. Defenders of technological determinism argue that the discovery and adoption of new technology is inevitable and that it’s destined to shape us, irrespective of humans in the loop. We’ve seen and heard the same story over and over for centuries: the printing press dulling memory, radio presented as a drug, automation of manufacturing leading to unemployment and economic collapse and, most recently, the Internet endangering our beloved children. Do you think that the initial fear of technology is something we need to endure, or are there ways of suppressing it?

Here is a device, whose voice is everywhere…

We may question the quality of its offering for our children, we may approve or deplore its entertainments and enchantments;

but we are powerless to shut it out…

it comes into our very homes and captures our children before our very eyes.

One of the ways people viewed the rise of radio in the past. Source: Chills and thrills: Does radio harm our children?

Media studies research suggests that determinism is the predominant narrative for interpreting new media. When we view GenAI through a deterministic lens, we get exactly the state-of-the-art fearmongering of big tech companies. For them, the question is no longer whether GenAI will change everything; they are already past that and hope you are too. The only discussion left is when the cataclysm that GenAI product XYZ is supposed to prevent will happen. This controlled and engineered subversion leads to resentment, potentially further deepening the subjective loss of human agency.

Extra! Our benchmarks have proven you should forget any tools you’ve been using until now! Image source: PD Image Archive.

Long-term impacts should not be ignored completely, but they are tough to predict, and they can overshadow short-term threats that are more likely to happen or are already happening, such as those around the general availability and explainability of GenAI technologies. Such effects are watered down in favor of saving the world: Sam Altman, the CEO of OpenAI, even threatened the EU that if it put too much regulation on AI (a legitimate fear from Altman’s side, to be fair), OpenAI would stop offering its products in Europe.

Science shows GenAI is awesome!

To fuel this resentment further, the emergence of AGI (for which we still don’t have a solid definition) is predicted to happen anywhere from a few months to an arbitrary number of years from now, depending on the boldness of the specific FOMO-based marketing campaign you happen to encounter. Yet AI safety research from fall 2025 suggests that humans still outperform AI automation in more than 95% of cases. Of course, this does not imply that there will never be self-improving technology as cognitively capable as a human being, but as of now, it feels to me we’re still quite far from it.

It is very easy to miss how biased (no pun intended) ML research is towards determinism, as most ML research today is funded and incentivized by big tech companies. Another point to make is the irrational emphasis on scientific research, which is skewed by cyclic and political incentives. Furthermore, if GenAI companies are not certain whether the technology they develop is safe, and confidently predict it might be harmful to society, wouldn’t the only ethical conclusion be to stop offering such technology to the public until it’s safe?[2]

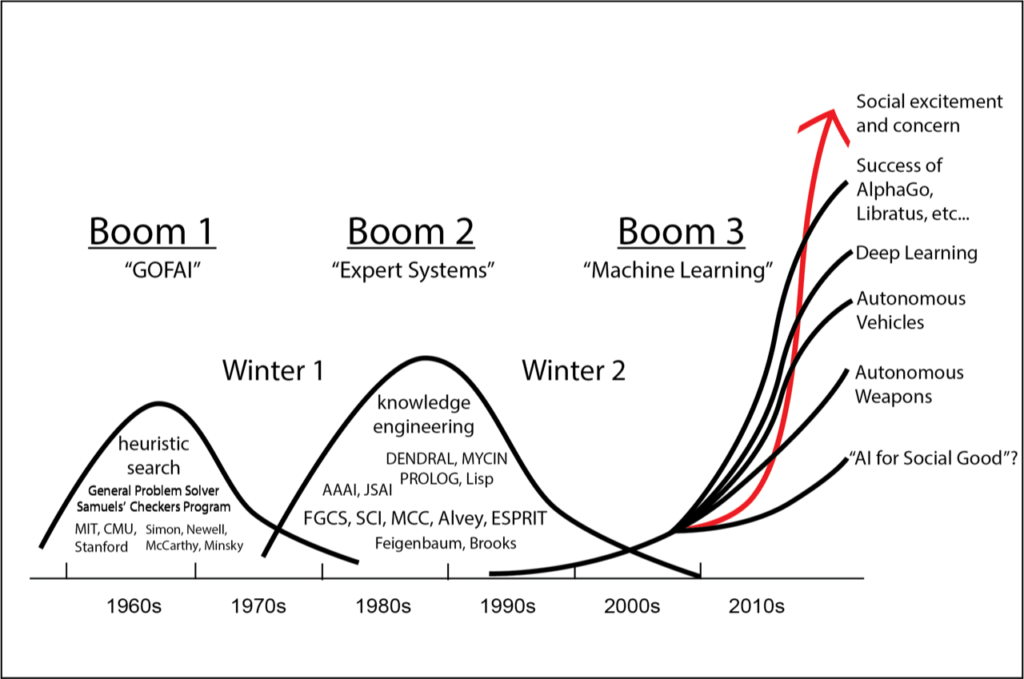

To put groundless exclamations such as “The AI bubble is not going to burst. The future. Is. Now.” into more context, it’s helpful to realize we’re currently at the peak of the third boom of artificial intelligence, the first of which started after World War II. It is not wise to predict the future solely from history, but the past does indicate that you simply cannot keep the hype up for very long.

The boom of large language models is far from being the first AI boom. Image source: The Society for the History of Technology.

LLM = Calculator

One counterweight to determinism is instrumentalism, which treats technologies as neutral tools that humans have under full control. From this, it follows that the relation between a human and a technology is determined by how people use the technology, as the technology itself has no agency.

So, let’s apply instrumentalism to GenAI to hopefully save us.

- A large language model is just a very smart statistical parrot whose job is to predict the next token.

- People do not know what they’re talking about if they assume a chatbot will ever be able to make any decisions. It has no self-awareness, moral compass, or sense of accountability.

- It is admittedly very intelligent, knowledgeable, and useful, but understands nothing and cannot reason.

- Creativity and human craft are what matter, and these are things GenAI will never be capable of achieving.

- Humanity will prevail, as always, because we humans say so.

However, such a “subhumanizing” approach is also limiting and blinding, as it underestimates the true impact GenAI products can have. Neuroscience research suggests that using GenAI makes us more dependent on it, as we rely too much on its confident outputs and sycophancy.

We also shouldn’t ignore the psychological effects these tools have on teenagers. Using a standard GenAI product can feel a lot like a slot machine. Sometimes the machine gives you gold; sometimes it’s confident rubbish, but rubbish nonetheless. But because it rewarded you once, you keep pulling the lever. If your prompting doesn’t work, it must be just a skill.md issue which many brogrammers are willing to help with. Let’s stop and ponder, though: overall, is using GenAI more useful to us than it is harmful? If technologies are just instruments, why is it that markets of attention—heavily based on hijacking human perception—are some of the most profitable markets today?

If someone tells you consistently and convincingly enough you should surrender, it might be hard not to. Image source: PD Image Archive.

Synthesis

Before we all die in 2027, let’s take a step back.

In the early 2000s, the Dutch philosopher Peter-Paul Verbeek was among those who proposed a synthesis of the two limping philosophies of technology: mediation. Mediation theory suggests that technology and humans are not separate entities acting upon each other; rather, they co-create our values. When we interact with and are surrounded by technology, we can think of these tools as artifacts that actively shape our perception and experience. For example, apart from making you see better, contact lenses can boost your confidence, because you don’t have to wear glasses.

We can think of GenAI, then, as an artifact that mediates the way we solve problems and has a direct impact on our willingness to let GenAI solve tasks for us rather than with us. It’s tempting to view mediation as just a rephrasing of determinism: we acknowledge GenAI co-creates the problem-solving process, yet we still feel no agency over what that reality looks like.

But we do have agency. GenAI doesn’t have to be a massively expensive, centralized black box, and it doesn’t need to act like an all-knowing therapist to be useful. By moving to a design that respects privacy and grants the user true structural control, we can build something that actually serves the student.

This shift in thinking is exactly why I’m embedding these ethical values directly into Heuri from day one. In the next article, I will propose those values. I am not the only one convinced, though, that similar values should be an innate part of any ethical ML product, not just an afterthought added because users complained too much or the EU said so.

So, instead of viewing GenAI deterministically or instrumentally, let’s approach it in the way that’s most beneficial to us, the users. Let’s enhance digital literacy, promote user empowerment and consider the varying contexts in which young people engage with online content, rather than simply relying on brittle regulations that cannot keep up with the progress of any technology.

[1] To be fair, research has shown that there are also technical reasons for this, as training an LLM to output “I don’t know” would result in a language model answering “I don’t know” most of the time.

[2] A very common counterpoint to this argument is that we cannot improve the technology unless it learns from large numbers of people. Firstly, we cannot verify that this is true, because the aggressive gatekeeping of GenAI research and development prevents us from drawing a complete picture independently of those pushing the technology. Secondly, it’s unethical. We don’t let pharmaceutical companies test their experimental cures on the general public just to see what happens and how many die in the process; why should we make an exception for GenAI?