GenAI Does Not Make Mistakes

Disclaimer: I base the following material on publicly available academic papers and research from 2023 through early 2026, together with my own experience of intensive usage of GenAI products for the past few years (namely ChatGPT, Perplexity, Claude Code and Gemini).

We’ve all been there

You’re going with a friend on a stroll in the nearest park. Since you haven’t seen each other for quite some time, you’re thrilled to see them—at least for the first five minutes, anyway. After a bit of mundane conversation—“Oh, wow! This is your twentieth photo of your baby/cat/bike, amazing! I think I haven’t seen this angle yet!”—it starts pouring down.

The prettiest cat in the world. Of course! Source.

You both briskly make your way to the café that’s fortunately nearby. On your way, your friend suddenly shakes their fist at the sky saying: “Damn you weather, I wish you didn’t make such stupid mistakes!”

Weather Boys of the AI World

How does that even remotely relate to the title of this article? Although we don’t usually attribute the ability to make mistakes to the weather, the two worlds behave surprisingly alike when viewed from enough distance: both are tough to predict reliably and even tougher to control.

Even though the obligatory disclaimer “AI can make mistakes” sounds like a fair and transparent warning against the hallucinatory nature of generative AI (GenAI), I view it as one of the many attempts by GenAI companies to blur the line between what feels “robotic” and what feels “human”. In the case of weather, “making mistakes” rarely makes any sense; it gets trickier with systems that manipulate natural language unprecedentedly well. Blurring this line isn’t inherently dangerous; the danger lies in how companies weaponize that ambiguity.

Before we go any further, let me state this as bluntly as possible: my intention is not to debate whether machines think, feel or have the ability to be creative. Tell you what: let’s assume they do all of this already better than us humans, for the sake of the argument. Even though such debates might be fruitful and eye-opening, too, I think it’s much more pragmatic to pose these questions:

What does using GenAI imply for us humans? How does it change the way we behave and solve problems, and our capacity for self-sufficient thinking?

How can we shape and change the way we, humans, use such technology on such a scale and with so much power?

Are the inevitable advances, marketed so aggressively by GenAI ambassadors, really that inevitable?

It’s easy to fall for the trap of technological determinism. It’s an evergreen in human history. Illustration source.

ELIZA, are you OK?

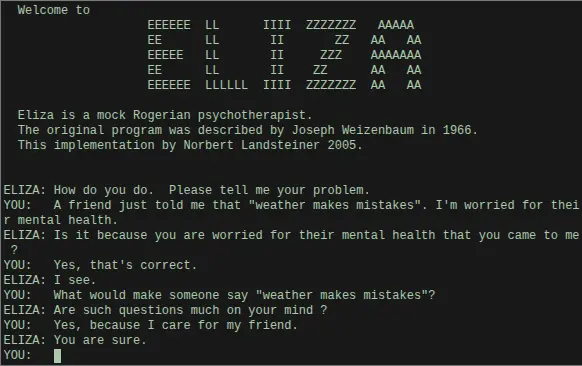

In 1966, the American computer scientist Joseph Weizenbaum created ELIZA, a pattern-matching program emulating a psychotherapist. Decades later, researchers coined the term ‘ELIZA Effect’ after the phenomenon the program had demonstrated, describing it as the “…persistent human tendency to attribute genuine understanding and intentionality to computational systems whose actual capabilities are far more limited than they appear.”[1]

ELIZA chatbot, devised by Joseph Weizenbaum in 1966. Source: author’s work, created using N. Landsteiner’s implementation.
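
To get a feel for how little machinery a pattern-matching "therapist" needs, here is a minimal sketch in Python. It is not Weizenbaum's original script: the rules, the `REFLECTIONS` table and the function names `reflect` and `respond` are all made up for illustration. The idea is only that a handful of regular expressions plus a pronoun swap can already produce replies that feel attentive.

```python
import re

# Hypothetical rules for illustration; NOT Weizenbaum's original DOCTOR script.
# Each rule pairs a regular expression with a reply template.
RULES = [
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "Why do you say you are {0}?"),
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "How long have you felt {0}?"),
    (re.compile(r"\bmy (mother|father|friend)\b", re.IGNORECASE),
     "Tell me more about your {0}."),
]

# Swap first-person words for second-person ones so the echo reads as a reply.
REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are", "mine": "yours"}

FALLBACK = "Please go on."


def reflect(fragment: str) -> str:
    """Drop trailing punctuation and swap first-person words."""
    words = fragment.rstrip(".!?").split()
    return " ".join(REFLECTIONS.get(word.lower(), word) for word in words)


def respond(utterance: str) -> str:
    """Return the reply of the first matching rule, or a generic fallback."""
    for pattern, template in RULES:
        match = pattern.search(utterance)
        if match:
            return template.format(reflect(match.group(1)))
    return FALLBACK


if __name__ == "__main__":
    print(respond("I am worried about my job."))
    print(respond("It rains a lot."))
```

With these made-up rules, `respond("I am worried about my job.")` returns "Why do you say you are worried about your job?", and anything that matches no rule gets "Please go on." Nothing in the program models worry or jobs; the sense of being understood is supplied by the reader.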

Cognition and AI researchers have recently extended the ELIZA Effect to the world of machine learning. In essence, users apply a ‘cognitive glue’ to anything that speaks their language, projecting human traits onto a black-box technology regardless of its actual capabilities.[1]

Such tendencies seem to be widespread: psychological and neuroscientific studies have repeatedly shown that, even when fully aware of the fictional nature of a character, readers and viewers experience authentic emotional engagement, empathy, and identification, a process termed “narrative transportation” or “simulation of social worlds”.[1]

DON’T PANIC, I’m just like you

Fast forward to 2026: the linguistic capabilities of large language models (LLMs) can feel both astonishing and frightening compared to pattern-matching systems like ELIZA. In my opinion, this is what makes all the advances of GenAI tricky: how easily interactions with them become interchangeable with parasocial interactions on the Internet. We no longer live in a world of amusing Nigerian princes offering us a million dollars in mangled English in spam campaigns; we are facing technology that manipulates language at an immensely sophisticated level, increasingly similar to, or even as capable as, humans.

Increasingly, it has been reported that users cannot tell the difference between human and LLM writing. Indeed, LLMs excel at persuasiveness and are sometimes even ascribed attributes of “empathy”. Research has also shown that anthropomorphism (the attribution of human characteristics to non-human entities) is positively correlated with increased trust in the system and a greater willingness to disclose sensitive personal information.[2]

It also seems that the temptation to boost the human-like appeal of GenAI products is intentional and very common. Companies developing top-class GenAI products, such as Google, OpenAI or Anthropic, deliberately use terminology that resembles human cognitive processes, such as “think”, “prove” or “reason”, instead of more domain-precise terms such as “infer” or “compute”.[2]

Here is an excerpt from the Claude Code documentation that is very illustrative of this:

[…] Extended thinking is enabled by default, giving Claude space to reason through complex problems step-by-step before responding. […]. Additionally, Opus 4.6 introduces adaptive reasoning: instead of a fixed thinking token budget, the model dynamically allocates thinking based on your effort level setting. Extended thinking and adaptive reasoning work together to give you control over how deeply Claude reasons before responding.

https://code.claude.com/docs/en/common-workflows, highlighted by MŽE

Let me be clear: there is inherently nothing wrong with using anthropomorphic terminology, which can ease feelings of discomfort and improve overall trust. What I grapple with is that this comfort and enhanced feeling of “authenticity” and “human-like closeness” is hijacked for the purposes of surveillance capitalism.[3]

Resistance is futile!

It’s chilling how Alan Turing anticipated the possible dynamics more than 75 years ago[4]:

An important feature of a learning machine is that its teacher will often be very largely ignorant of quite what is going on inside, although he may still be able to some extent to predict his pupil’s behavior.

Since, to its users[5] (and, to some extent, even to ML researchers), the outputs of GenAI are practically the outputs of a black box, it’s hard to trace them back to their origin and reason about it. This creates an explanatory gap, where fantasy, self-projections and fatalistic theories fill the space.[6] The explanatory gap is twofold in nature: we know neither how (“Oh, what’s a Transformer, anyway?”) nor why (“Why did ChatGPT give this as an output? It gave me a completely different answer just a few moments ago!”).

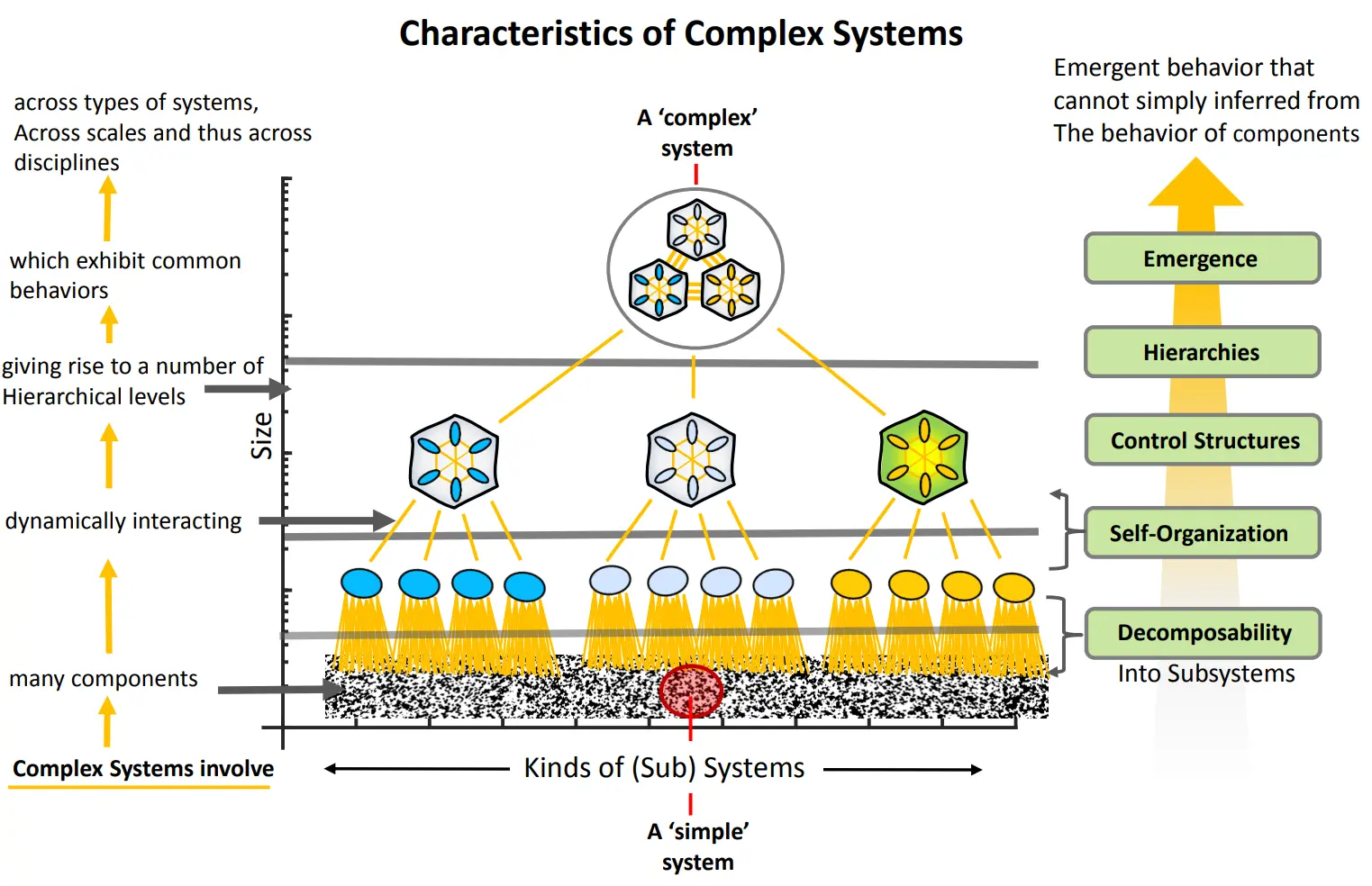

Modern large language models cannot be explained simply by decomposing them into individual moving parts.[1]

It’s easy to conceptualize what a phone does when you press the call button even if you’re not a mobile network engineer. It’s also quite manageable to learn (after a lot of time, admittedly) how CPU architecture has evolved and become more sophisticated and complex. However, this is hardly the case when you hit enter after giving a prompt to ChatGPT, at least at the beginning of 2026. This knowledge gap is what GenAI executives can capitalize on, and they certainly do:

I believe we are entering a rite of passage, both turbulent and inevitable, which will test who we are as a species. Humanity is about to be handed almost unimaginable power, and it is deeply unclear whether our social, political, and technological systems possess the maturity to wield it.

Essay: “The Adolescence of Technology”, Dario Amodei, Anthropic CEO

The basic development of AI technology and many (not all) of its benefits seems inevitable (unless the risks derail everything) and is fundamentally driven by powerful market forces.

Essay: “Machines of Loving Grace” (October 2024), Dario Amodei

We’ll find new things to do. I have no worry about that… I think what’s gonna happen is this is just the next step in a long unfolding exponential curve of technological progress.

TED Talk interview with Adam Grant (April 2024), Sam Altman, OpenAI CEO

The apocalypse and the uncertainty of the future seem to be a never-ending source of human fascination. This Wikipedia page lists more than 100 predictions of the collapse of civilization. Illustration source.

I am not a number! I am a free man!

When we’re interacting with GenAI, agency is not just perceived; it is co-constructed by its users. In other words, using GenAI products brings us comfort partly because we project comfort onto them, irrespective of the objective capabilities and limitations of the technology. That doesn’t mean, though, that AI system owners need to exploit this, e.g. by creating a GenAI product (a product, not the technology itself) that is overly sycophantic or that sometimes leads to AI psychosis[7], a novel phenomenon in which users reportedly experience psychotic episodes as a result of using GenAI chatbots.

It would be naive to think that there is one universally applicable solution to designing ethical and transparent AI systems. At the same time, we should make full use of machine learning and its incredible advances that have happened recently.

Even though GenAI products are immensely powerful and are likely to shape the world around us, I propose to Make Machine Learning Boring Again©. While we’re discussing AI system autonomy, we’re losing our own: not to ChatGPT, Gemini or Claude Code, but to OpenAI, Google and Anthropic. That’s the crucial distinction.

Instead of clinging to the next TED talk where we will learn how to “democratize the paradigm shift toward safe, human-centric AGI”, we can employ and develop our own ML systems whose data flows are fully under the control of the business and/or its customers. Precisely because the advances in ML have been so fundamental over the past few years, it’s possible, and indeed advisable, to tune out the alluring voice of the GenAI marketing sirens and go back to building robust systems that can actually help us make fewer mistakes.

References

1. Enrico, D. S., & Rizzi, A. (2025). Noosemia: toward a Cognitive and Phenomenological Account of Intentionality Attribution in Human-Generative AI Interaction. arXiv (Cornell University). https://doi.org/10.48550/arxiv.2508.02622

2. Peter, S., Riemer, K., & West, J. D. (2025). The benefits and dangers of anthropomorphic conversational agents. Proc. Natl. Acad. Sci. U.S.A., 122(22), e2415898122. https://doi.org/10.1073/pnas.2415898122

3. Surveillance capitalism is a term coined by Shoshana Zuboff, an American scholar and writer. It refers to practices of tech companies that capitalize on human experience without their consent. There is no reason to believe that surveillance capitalists like Google don’t employ and design GenAI products in the same way.

4. Turing, A. M. (1950). Computing Machinery and Intelligence. Mind, LIX(236), 433–460. https://doi.org/10.1093/mind/LIX.236.433

5. It’s tricky to say who is the end user of GenAI products, at least in 2026. Will it be marketing companies? Politicians? One way or another, what I mean by “user” here are regular users prompting ChatGPT how to “throw a creative baby shower party if I’m expecting quadruplets” or “please, pretty please, find me all the facts that will convince my friend that Trump is stupid and a moron, make no mistakes”.

6. A great example of this is AI 2027 at the time of its inception that had nothing to do with rationally predicting the future of generative AI as a technology, but is rather a geopolitical phantasmagoria, appealing to the fear of uncertainty of the future by predicting certain outcomes, thus filling the explanatory gap.

7. https://www.psychologytoday.com/us/blog/urban-survival/202507/the-emerging-problem-of-ai-psychosis