Heuri: Ethics II

This is a continuation of the previous article.

In this article, I will finish outlining what ethical values Heuri incorporates as an EdTech platform. The other two ethical concepts I’d like to focus on are transparency and student autonomy.

3. Transparency

For Heuri to work as intended, it needs to track user activity. Even though user tracking feels like a euphemism for Orwellian automated surveillance, I’m firmly convinced tracking users can be done ethically and with the user’s full control and awareness. The fact that this is often not the reality is a separate issue entirely.

One of the ways to avoid using spyware like Google AdSense or Hotjar is to write user tracking in-house. From where I stand, it’s a bad idea for many reasons:

- Such user-tracking logic wouldn’t be battle-tested by others and would therefore incur maintenance and development costs.

- A user or an institution is likely to trust known spyware more than an arbitrary in-house tracking system.

- User tracking is most definitely not the core domain of Heuri.

- Just because we can do something in-house does not mean we should.

At the same time, however, full control over user data is a must, so the fewer magic black holes, the better. From a technical standpoint, there will be two types of telemetry. The first is UI telemetry, which occurs on the frontend and is optional: button clicks and such. The biggest caveat of UI telemetry is that it’s aggressively filtered by modern web browsers, so it’s safer to assume such telemetry won’t be available most of the time. If the user has an ad blocker or strict privacy filter on, that’s a preference Heuri will honor.[1]

The other type is business-specific telemetry that will be managed on the backend: things like LLM evaluations, logical errors in user input, etc. This telemetry is much more crucial as it will provide better insight into an individual student’s progress.[2]
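To make the split concrete, here is a minimal sketch of how the two kinds of events could be modelled. It is an illustration only, not Heuri’s actual code: the class names, field names, and example event names are all hypothetical. The one idea it tries to capture is that a UI event has no place to store a user identifier, while a backend learning event is tied to one student.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


def _now() -> datetime:
    return datetime.now(timezone.utc)


@dataclass(frozen=True)
class UiEvent:
    """Optional frontend event; deliberately has no user identifier field."""
    name: str  # e.g. "hint_button_clicked" (hypothetical event name)
    page: str
    at: datetime = field(default_factory=_now)


@dataclass(frozen=True)
class LearningEvent:
    """Backend event tied to one student (hypothetical fields)."""
    user_id: str
    kind: str    # e.g. "llm_evaluation" or "logic_error"
    detail: str
    at: datetime = field(default_factory=_now)


def ui_event_from_payload(payload: dict) -> UiEvent:
    # The client is untrusted, so only whitelisted fields are copied.
    # Anything else it sends, including identifiers, is discarded.
    return UiEvent(name=str(payload["name"]), page=str(payload["page"]))
```

Because the UI event type simply cannot carry a `user_id`, anonymisation does not depend on the client behaving well.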

Having said that, I’m fully aware that such information is sensitive and must be treated with the utmost caution. That’s why security by design is a must, not a future hypothetical.

3.1. Minimalism

To be honest, I don’t have a detailed plan for what exactly I’ll be tracking, as I don’t have the full picture yet. One thing I know for sure is that telemetry will be opt-in rather than opt-out. Collecting as much data as possible “just in case Heuri needs it” isn’t what I’d call transparent. I want to show my users that they can use a privacy-first service without feeling paranoid, and with an informed understanding of why tracking happens and how specifically it benefits them.
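As a rough sketch of what opt-in means in practice, the snippet below stores an event only when the user has explicitly enabled that purpose. Everything in it is an assumption made for illustration: the class names, the idea of per-purpose consent, and the in-memory storage are not a description of how Heuri is built.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentRegistry:
    """Tracks which telemetry purposes each user has explicitly enabled."""
    granted: dict[str, set[str]] = field(default_factory=dict)

    def grant(self, user_id: str, purpose: str) -> None:
        self.granted.setdefault(user_id, set()).add(purpose)

    def revoke(self, user_id: str, purpose: str) -> None:
        self.granted.get(user_id, set()).discard(purpose)

    def allows(self, user_id: str, purpose: str) -> bool:
        # Opt-in: having no record for a user means "no".
        return purpose in self.granted.get(user_id, set())


@dataclass
class TelemetryStore:
    consent: ConsentRegistry
    events: list[tuple[str, str, str]] = field(default_factory=list)

    def record(self, user_id: str, purpose: str, detail: str) -> bool:
        """Store the event only if the user opted in; return whether it was stored."""
        if not self.consent.allows(user_id, purpose):
            return False
        self.events.append((user_id, purpose, detail))
        return True
```

The default is the important part: a user who never touched the settings is never tracked, and revoking consent stops collection immediately.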

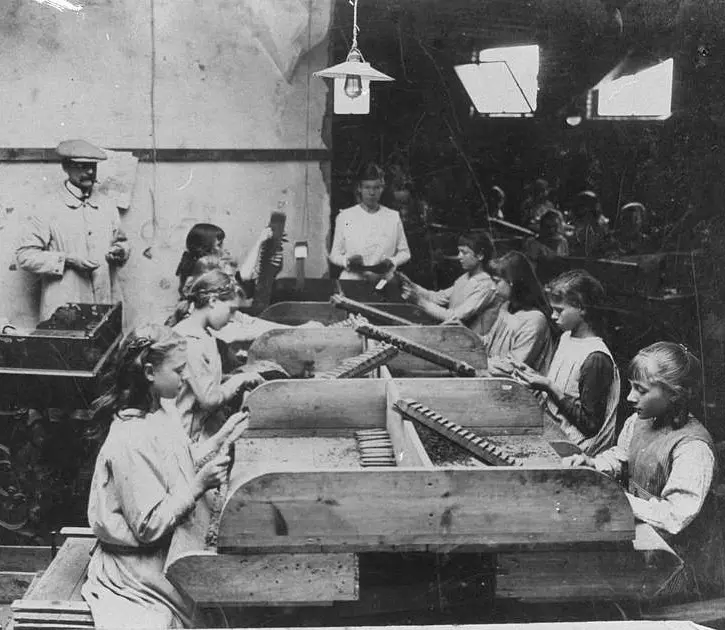

You shouldn’t have to hack your way through legalese to understand what data about you are collected and inferred. Image source: Picryl

From a pedagogical point of view, I would like to track the mistakes and strengths of users to allow for content personalisation: not only what to recommend but also how to present it. Many EdTech products like Brilliant or Duolingo don’t seem to adjust to a student’s needs and hurl seas of structurally prebaked content at them. If that’s someone’s preferred way of learning, great, more power to them! With Heuri, I want to offer an alternative.

In my math tutoring practice, I learned that adjusting the difficulty and form of problems is exactly what keeps students engaged. Showering them with the same examples over and over again in the hope that “they’ll get it sooner or later” only causes fatigue and a passionate hatred of the subject. I would love to transfer the adaptiveness of my tutoring to Heuri, even though I’m fully aware it’s a very ambitious goal. Tell me, though, which feedback feels more inviting to you:

“Please try again”

or this:

“You already tackled X in a different context, perhaps this problem needs a rest. How about leaving it aside and taking a look at Y?”

3.2. Explainability

McKinsey notes that over 40% of business leaders see a lack of explainability as a key risk of AI, yet only 17% are addressing it. While explainable AI remains architecturally difficult to achieve, that doesn’t mean it’s impossible.[3]

Trust in AI doesn’t come from it being “smart” or “cute” or behaving like a person. It comes from UX that makes it transparent what the service needs AI for and how it’s using it. Tenacious adherence to explainability might also be one of the most effective ways of mechanising GenAI services, i.e. making them feel “cold”, as I’ve discussed in the previous post.[4]

Heuri should treat every AI failure as a moment to demonstrate integrity. A well-designed fallback, such as “I didn’t understand, can you rephrase?”, tells the user far more about what’s happening and what is required from them than a confidently presented misapprehension. It goes without saying that the user should also be able to flag incorrect or even harmful content and override automated actions when appropriate.
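One simple way to picture such a fallback is a threshold on how sure the system is about its reading of the student’s input. The sketch below is hypothetical from top to bottom: it assumes some confidence score between 0 and 1 is available, which is not a given with language models, and the threshold value is an arbitrary placeholder.

```python
from dataclasses import dataclass

# Hypothetical cut-off; it would need tuning against real student data.
CONFIDENCE_THRESHOLD = 0.7


@dataclass(frozen=True)
class Interpretation:
    text: str          # what the system thinks the student meant
    confidence: float  # assumed score in [0, 1]; how to obtain it is out of scope


def respond(interpretation: Interpretation) -> str:
    # Below the threshold, ask for clarification instead of guessing.
    if interpretation.confidence < CONFIDENCE_THRESHOLD:
        return "I didn't understand, can you rephrase?"
    # Above it, still show the reading so the student can correct it.
    return f"I read your answer as: {interpretation.text}"
```

Even the confident branch states what the system understood, so a wrong reading is visible and can be flagged rather than silently acted on.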

Even if a recommender system is confident and adamant that the user may want to explore conic sections while the user wishes to learn every detail of an ellipse, Heuri should make it clear why it thinks so. One way of achieving this is through the ‘Because you…’ labels familiar from existing recommendation systems. Another popular way is to provide further explanation in a tooltip for curious users seeking more detail and context.
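In code, this could be as small as refusing to create a recommendation that has no reason attached. The following is a sketch under assumptions: the `Recommendation` type, its fields, and the wording of the label are invented for illustration and say nothing about how the recommender itself picks a topic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    topic: str
    reason: str   # short student-facing "Because you ..." label
    details: str  # longer explanation, e.g. for a tooltip


def recommend(topic: str, evidence_topic: str) -> Recommendation:
    # The reason is built from the same evidence that triggered the
    # recommendation, so the label cannot drift away from the logic.
    reason = f"Because you recently practised {evidence_topic}"
    details = (
        f"{topic} builds on {evidence_topic}. "
        "You can dismiss this suggestion or pick another topic."
    )
    return Recommendation(topic=topic, reason=reason, details=details)
```

For example, `recommend("conic sections", "ellipses")` yields the label "Because you recently practised ellipses", with the longer text available for the tooltip.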

To paraphrase a well-worn joke: LLMs make very fast, very accurate mistakes. Heuri should show and own them. Image source: Picryl

4. Autonomy

Heuri sounds cute and all, but don’t you consider it naive to develop an EdTech platform given how versatile GenAI chatbots already are? How could a startup compete with enterprises of such enormous power and resources? Isn’t it already too late?

Generalist GenAI products are typically designed for a global market, with opaque behaviour and objectives optimised for engagement and scalability. By design, they are anything but a learning tool.

But that’s ridiculous! What do you mean “not suitable for learning”? All the chatbots have a learning guide mode and you can learn great stuff from them; besides, haven’t you heard of services like NotebookLM?

So-called ‘study modes’ are just fancy behavioural wrappers telling the language model to act like a tutor, without embedding actual pedagogical practices. It’s similar to the overromanticised marketing in the video game industry: just because you learn a thing or two playing Kingdom Come: Deliverance doesn’t mean education is the main purpose of the product or what the product should be used for. It makes you feel better about yourself, but it has nothing to do with really digging deep into learning.

Pedagogy-aware tools must encourage independence of thought, not amplify a preexisting habit of delegating thinking to a tool. Even though we’re using natural language to create a dialogue, most GenAI tools are best suited to quickly solving a problem for their users. That’s great if you already have experience and just need to speed things up, but potentially harmful for a high school student. Research has shown that poor use of AI can lead to lower performance than before using it.

Heuri will try to combat prompt fatigue by introducing interactivity to the process of learning: showing choice chips, providing interactive playgrounds where a student can test their hypotheses, or facilitating collaboration to create cognitive links that help them name math concepts in their own language.

Shouldn’t we empower students instead of forcing them into the ways adults think are best? Image source: Picryl

We also cannot force students to merely juggle and stretch their short-term memory. Their mathematical understanding should be solid and lasting. From my experience, as soon as a student stops asking questions, I know something’s off. Either they’ve completely dissociated from the topic, or they genuinely want to understand but don’t know how or what to ask. EdTech should encourage curiosity.

When preparing this article, I also came across a brilliant critique by Carl Öhman that touches on the importance of human cognition as well. Öhman illustrates this with the judicial system: the practice of the courts should be shaped by the present generation of judges, not just by an entity that interprets all cases through the lens of the past, since it cannot be trained on data from the future and arguably not even on data from the present.

The same goes for education: we shouldn’t build EdTech products that perfectly emulate past pedagogical practices, because we need to constantly adapt to the ever-changing challenges in education, artificial intelligence being just one of them.

Wrapping It Up

To sum up, Heuri holds these four values:

- Respect: Heuri prioritizes user privacy by keeping data entirely internal and deliberately avoids anthropomorphizing its AI to prevent students from forming unhealthy emotional attachments.

- Novelty: The platform maintains engagement by delivering bite-sized, pedagogically sound challenges that dynamically adapt to the student’s individual level to support discovery and productive failure.

- Transparency: Heuri ensures users have full control over opt-in telemetry and prioritizes explainability by clearly communicating why the system makes specific recommendations or mistakes.

- Autonomy: Rather than simply solving problems for users, the platform employs interactive methods that encourage independent thought and natural curiosity to build lasting mathematical understanding.

[1] There are definitely ways to bypass any ad blocker, but that kind of defeats the value of transparency for me.

[2] This division of responsibilities is on purpose: UI tracking happens on the client side, which might be compromised, and is therefore always anonymised. Business-critical tracking is specific to a user and must therefore be handled on the backend.

[3] Fortunately, there are already brilliant tools and frameworks that help us understand why a language model behaves the way it does, LIME and SHAP being among the most prominent. I’ll provide more insights as soon as I start experimenting with either of them, as I’ve already noticed that standard “vanilla” models are very bad at what I need to achieve because they are too generalist (duh!).

[4] By cold, I don’t imply that the platform will be indifferent and sterile. It’s rather about making it clear that the core engine of Heuri is still a language model that has no empathy, memory or understanding.